See it in action.

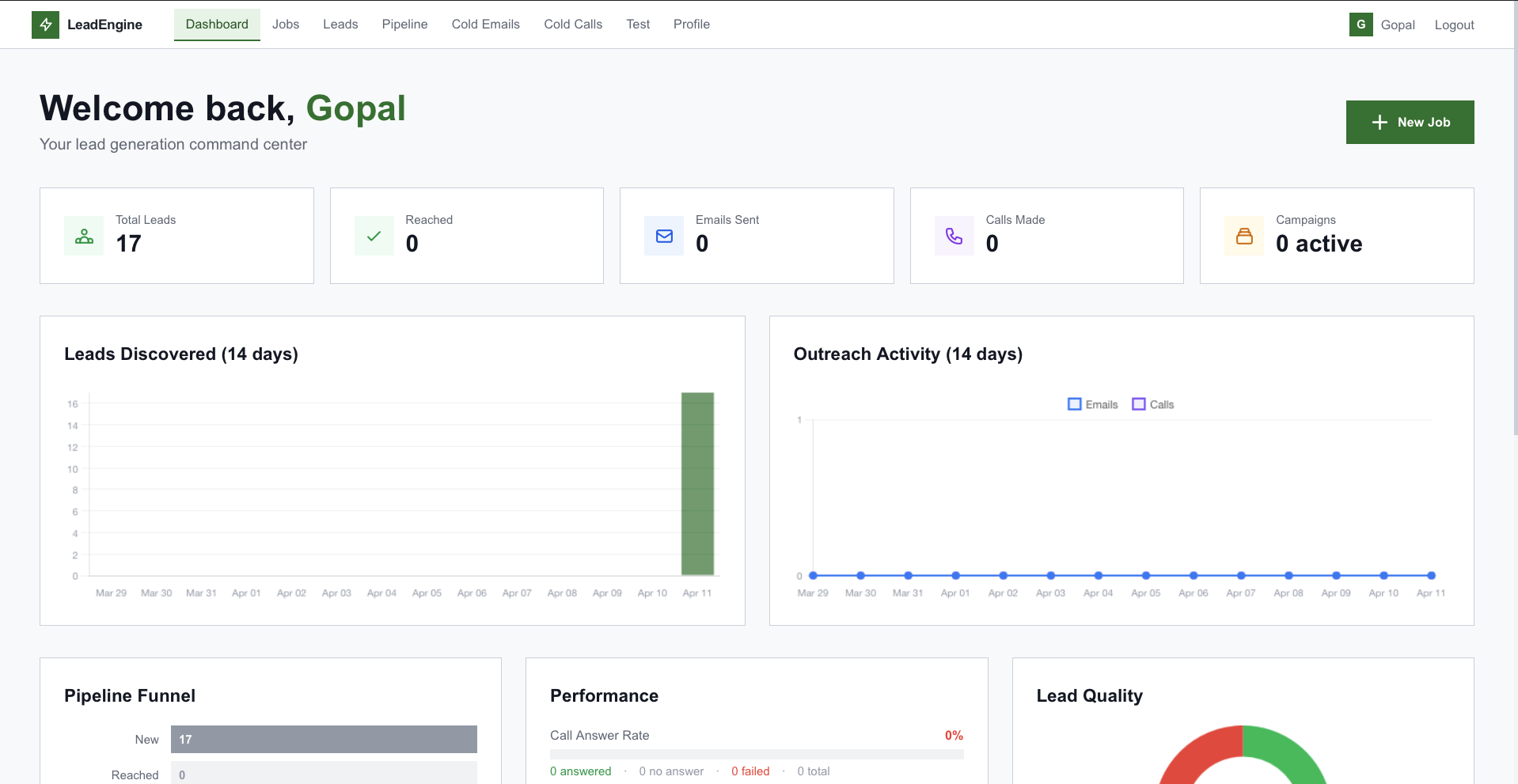

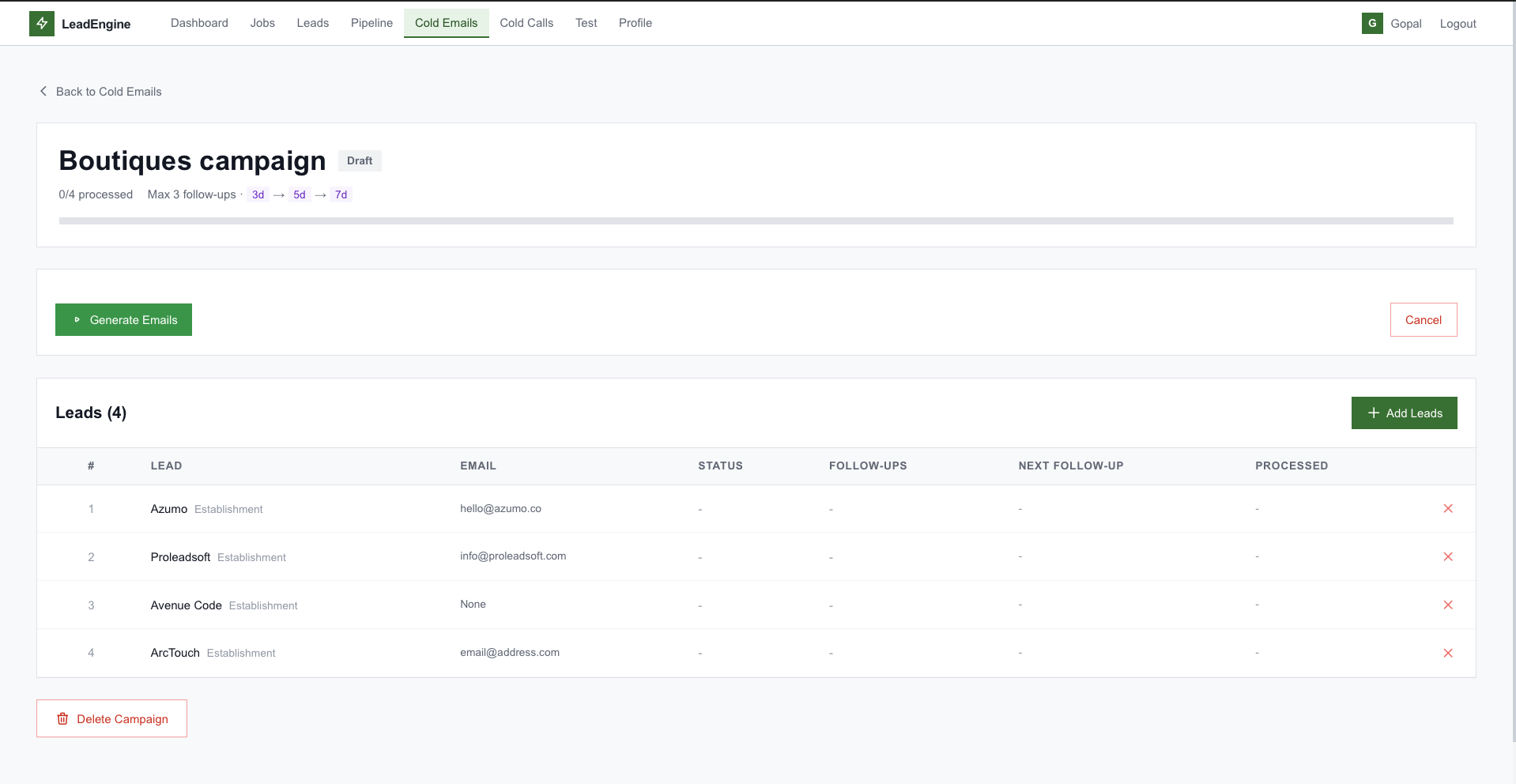

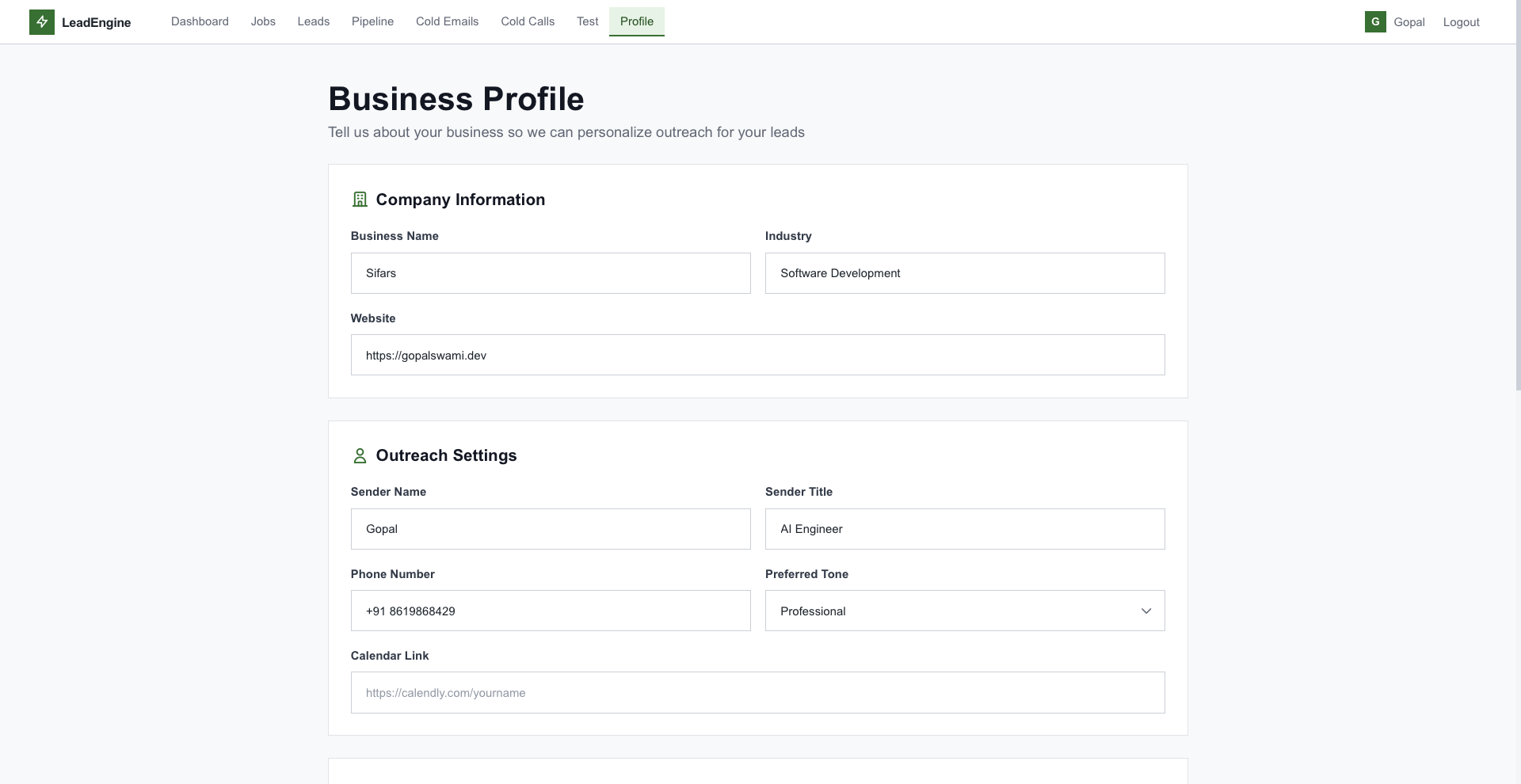

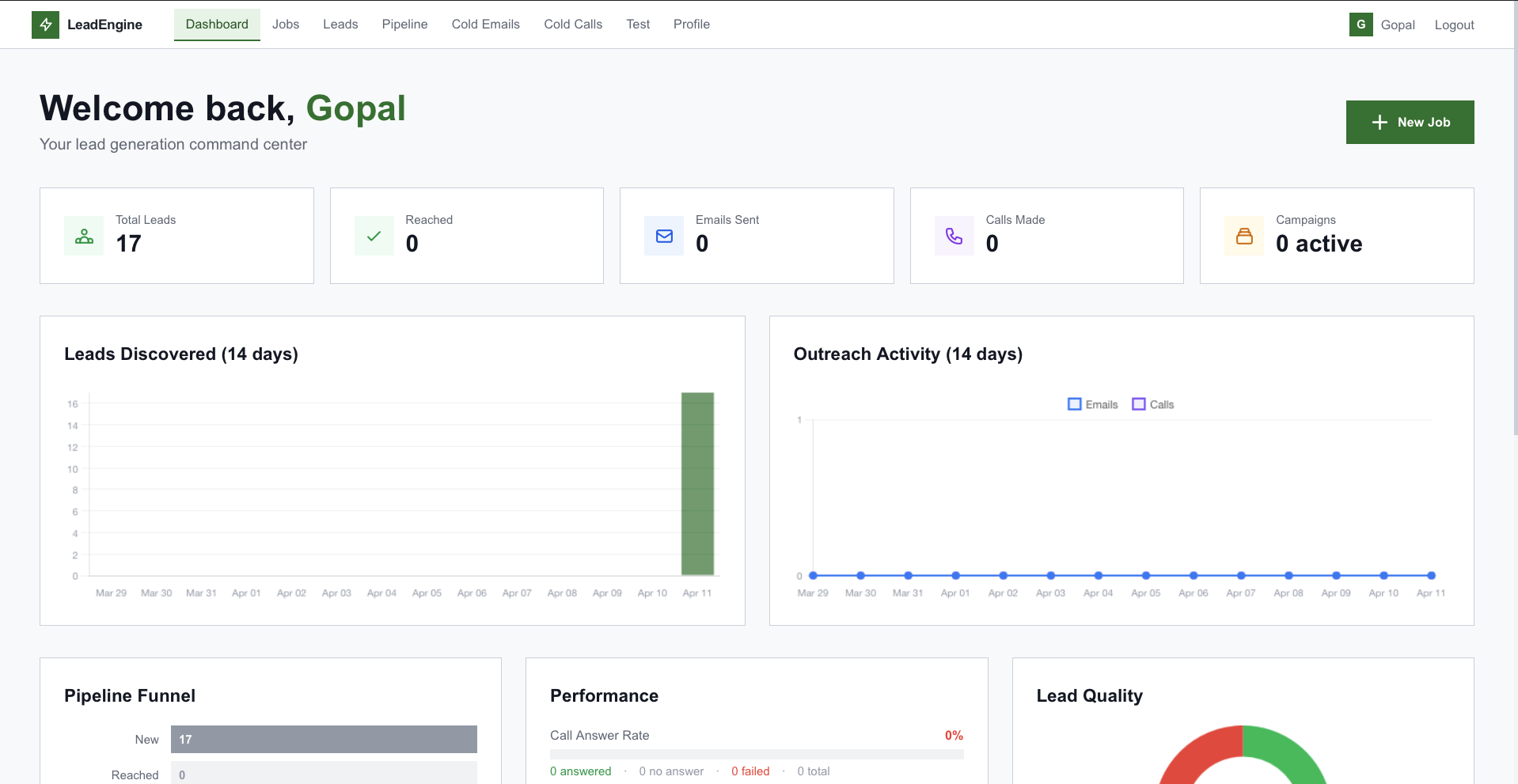

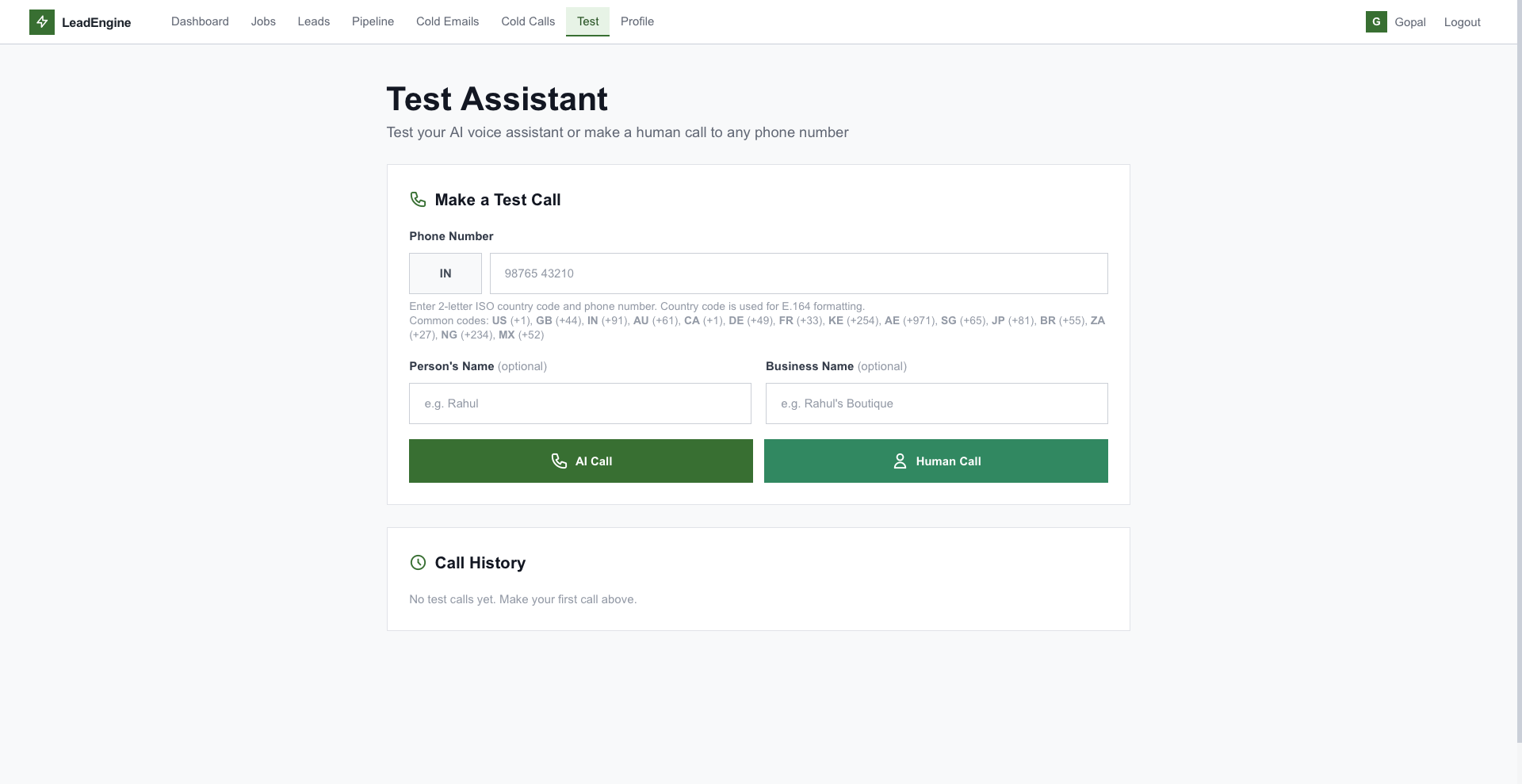

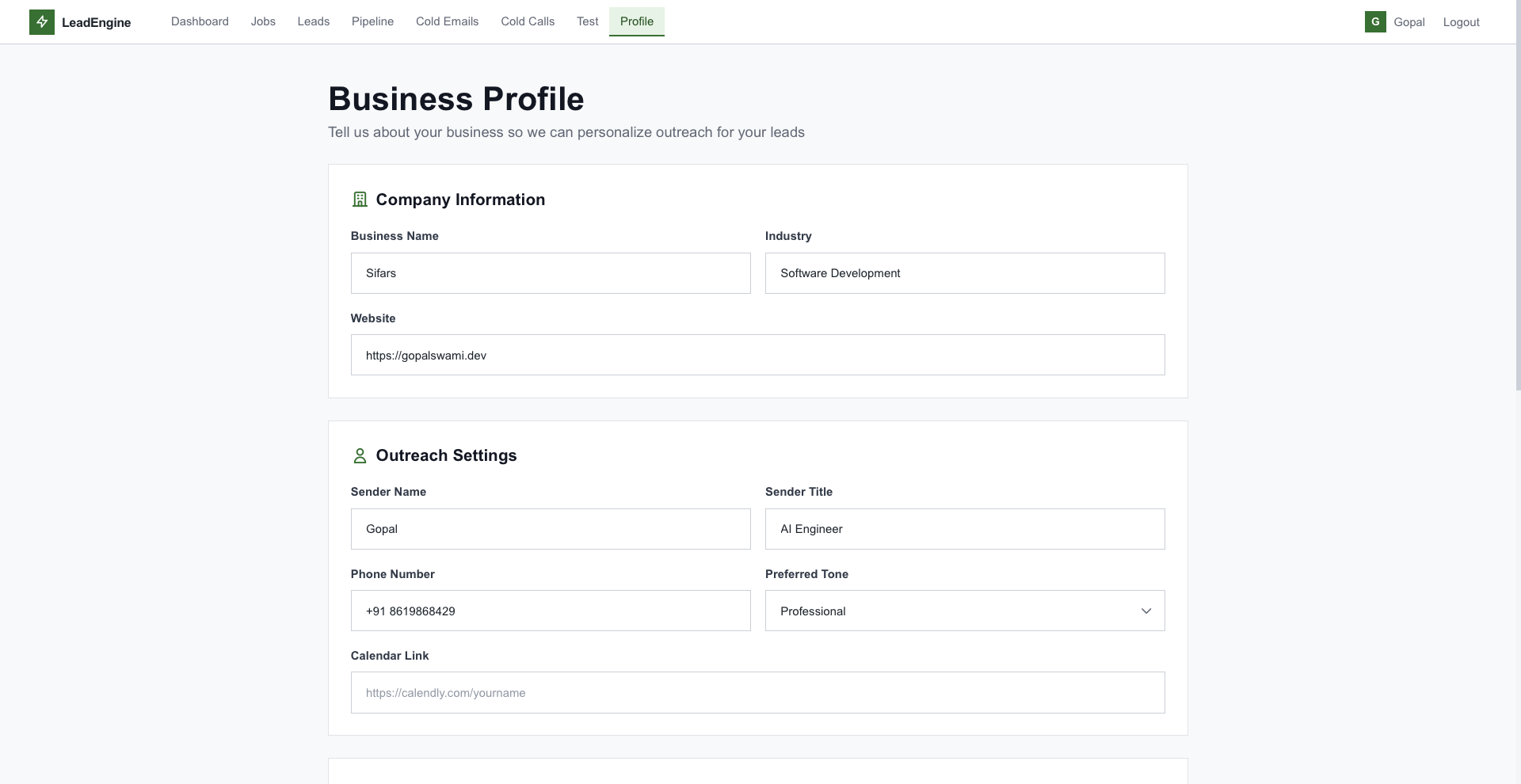

A server-rendered Jinja2 UI with TailwindCSS — dashboards, lead tables, kanban, voice test console, and more.

An AI-native platform that discovers, enriches, scores, and reaches out to B2B leads on its own — Google Places → Web scrape → Claude analysis → personalized email + AI voice calls, all from one query.

Sales reps spend 80% of their day not selling. They're copying addresses, hunting emails, researching websites, and rewriting the same outreach, one lead at a time.

Google Maps, spreadsheets, LinkedIn, Hunter, a CRM, an email client — every prospect touches half a dozen surfaces.

Building a 100-lead targeted list from scratch takes a full day — research, enrichment, dedup, contact hunting.

"Personalization" means swapping first names. Real context — recent launches, hiring, signals — gets ignored.

Most replies come on follow-up #3, but manual sequences drop off after #1. Deals are left on the table.

A unified platform that runs the entire prospecting workflow as an autonomous, paced, auditable pipeline.

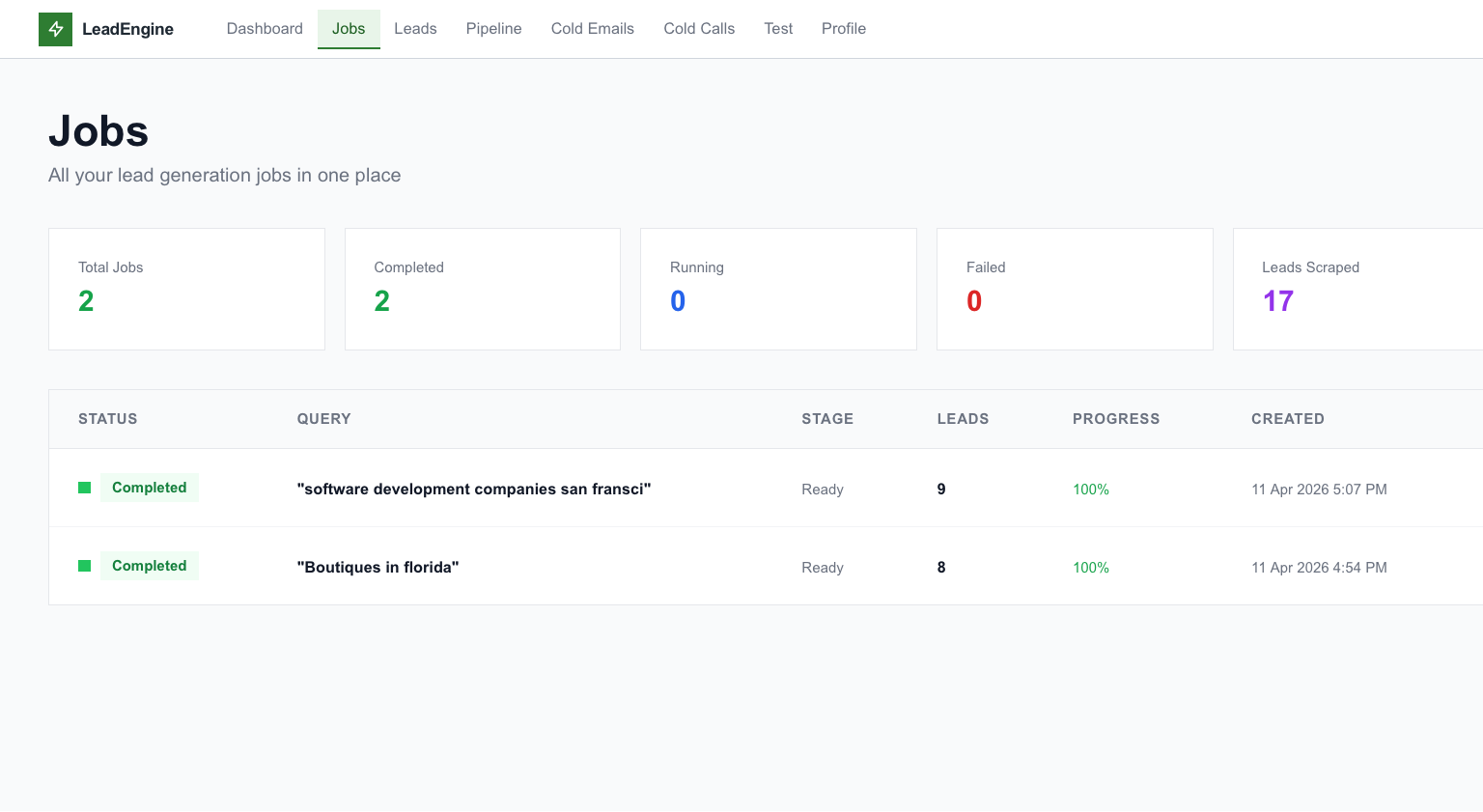

"boutiques in Texas USA." Optional filters for website, phone, max results.

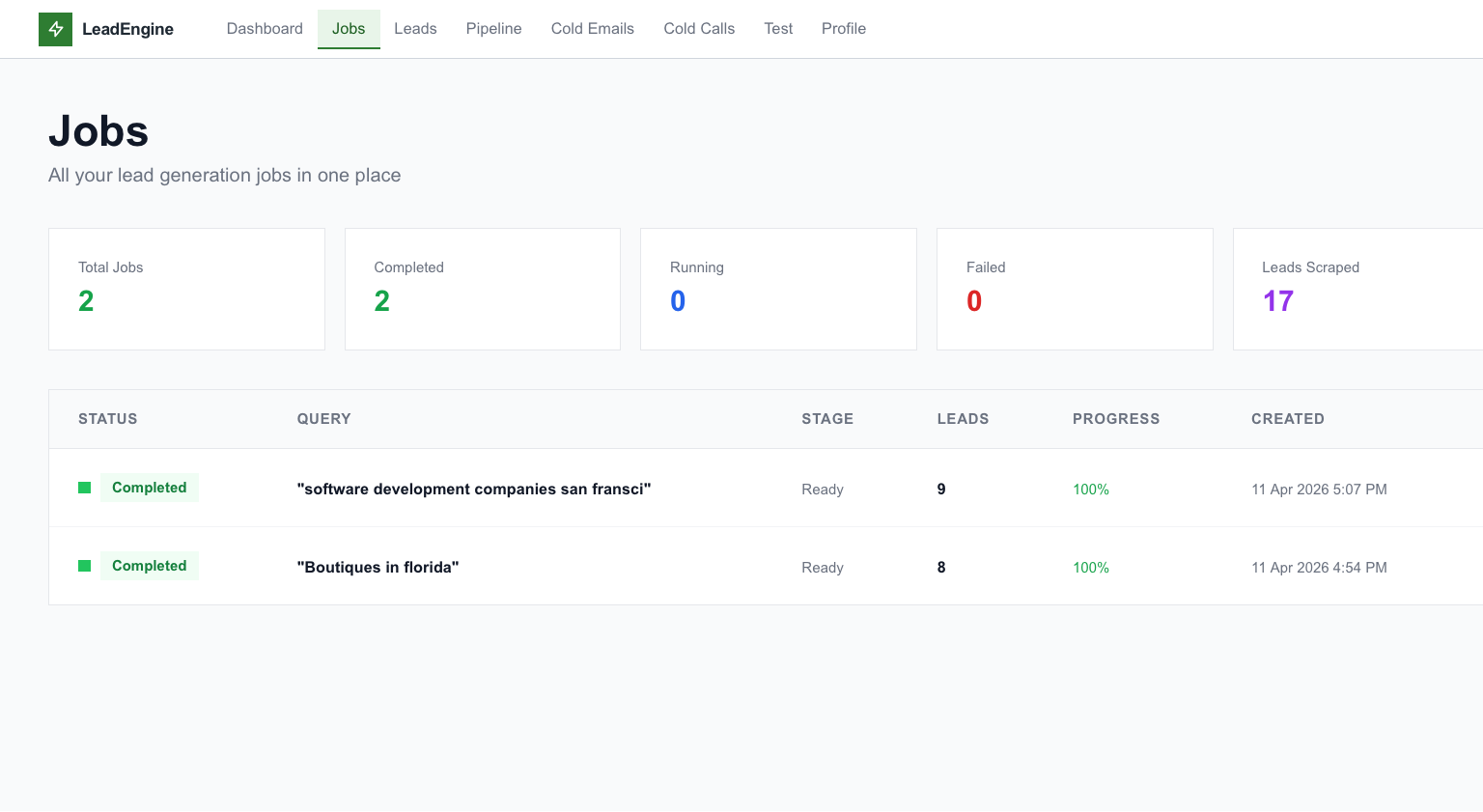

Discovery → Enrichment → Deep research → AI scoring → Ready. Async, resumable, paced.
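"Resumable" means a job that crashes mid-run can pick up at the stage it was in rather than starting over. The following is a minimal sketch that assumes stages run in a fixed order and are safe to re-run. `remaining_stages` is a hypothetical helper. Of the stage identifiers, only `"discovery"` and `"deep_research"` appear in the `run_job_background` snippet; the rest are illustrative.

```python
from typing import Optional

# Hypothetical stage identifiers, in pipeline order.
STAGES = ["discovery", "enrichment", "deep_research", "ai_scoring", "ready"]


def remaining_stages(current_stage: Optional[str]) -> list[str]:
    """Stages still to run for a job, re-running the one it was interrupted in.

    Assumes every stage is idempotent, so repeating a half-finished stage is safe.
    """
    if current_stage is None:  # the job never started
        return list(STAGES)
    return STAGES[STAGES.index(current_stage):]
```

On restart, a worker would read the persisted `current_stage` and run only what this returns.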

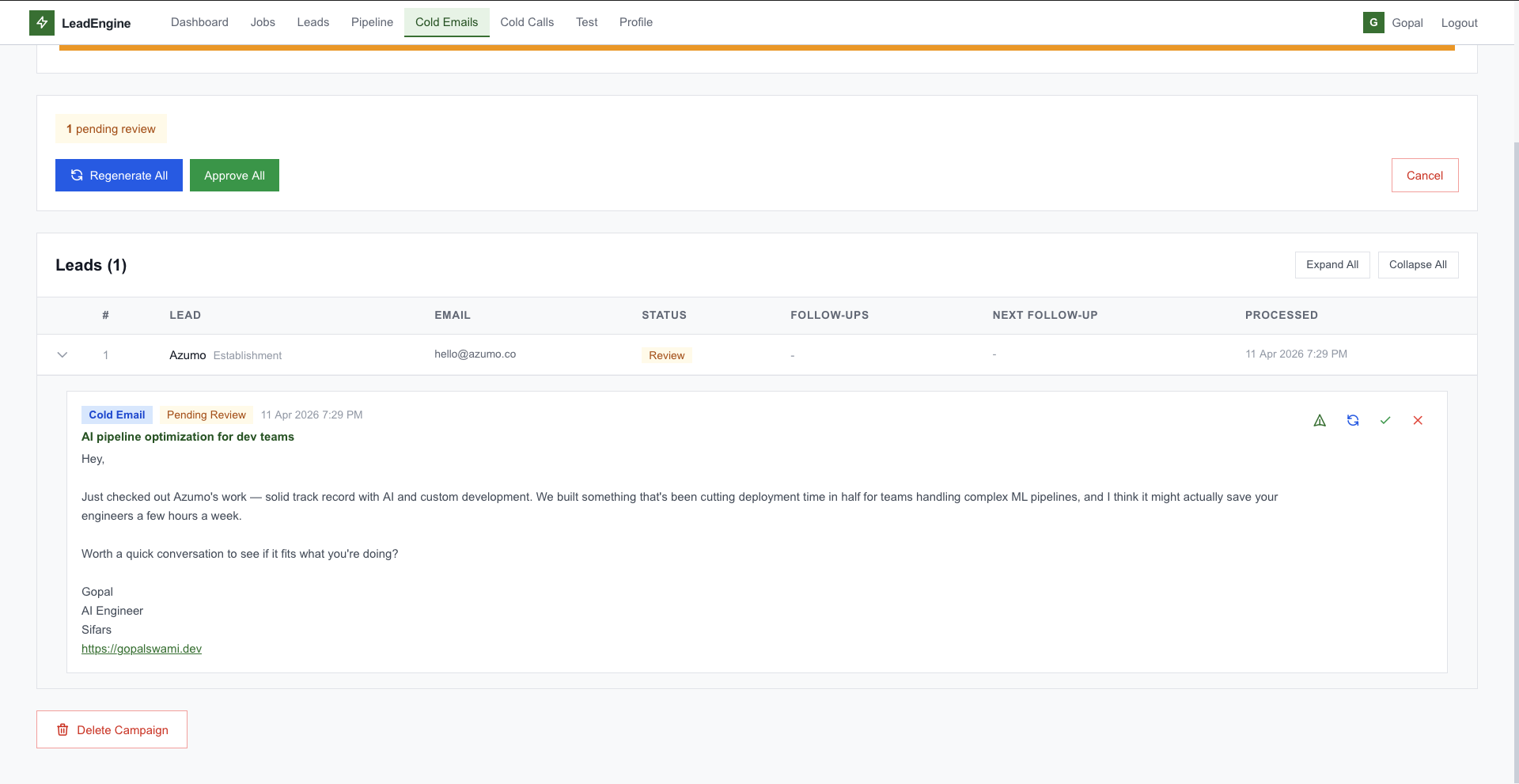

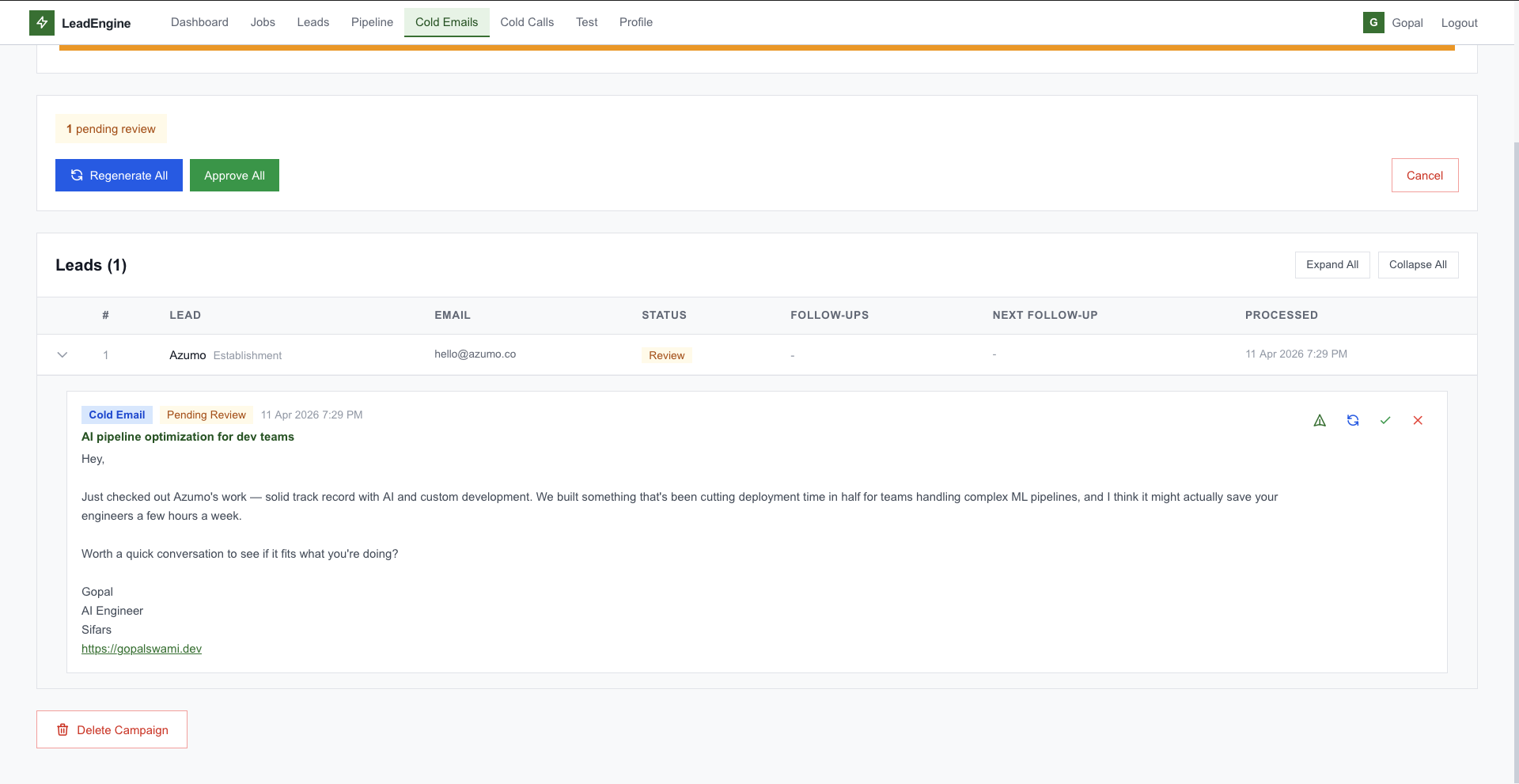

Claude writes emails and scripts using the lead's actual research — signals, products, contacts — not templates.
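One way to make Claude use the research rather than a template is to pass the stored research directly into the prompt. The following is a minimal sketch. `build_outreach_prompt` and the lead keys (`name`, `signals`, `products`, `contacts`) are hypothetical and do not come from the project.

```python
import json


def build_outreach_prompt(lead: dict, tone: str = "friendly") -> str:
    """Pack a lead's stored research into the user message sent to Claude.

    The dictionary keys read here are hypothetical field names.
    """
    context = {
        "company": lead.get("name"),
        "signals": lead.get("signals", []),
        "products": lead.get("products", [])[:5],  # cap lists to keep the prompt short
        "contacts": lead.get("contacts", [])[:3],
    }
    return (
        f"Write a short cold email in a {tone} tone.\n"
        "Reference at least one concrete signal or product below; "
        "do not invent facts that are not in the research.\n\n"
        f"Research:\n{json.dumps(context, indent=2)}"
    )
```

Serializing the research as JSON keeps the facts clearly separated from the instructions, which makes it easier to tell the model not to go beyond them.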

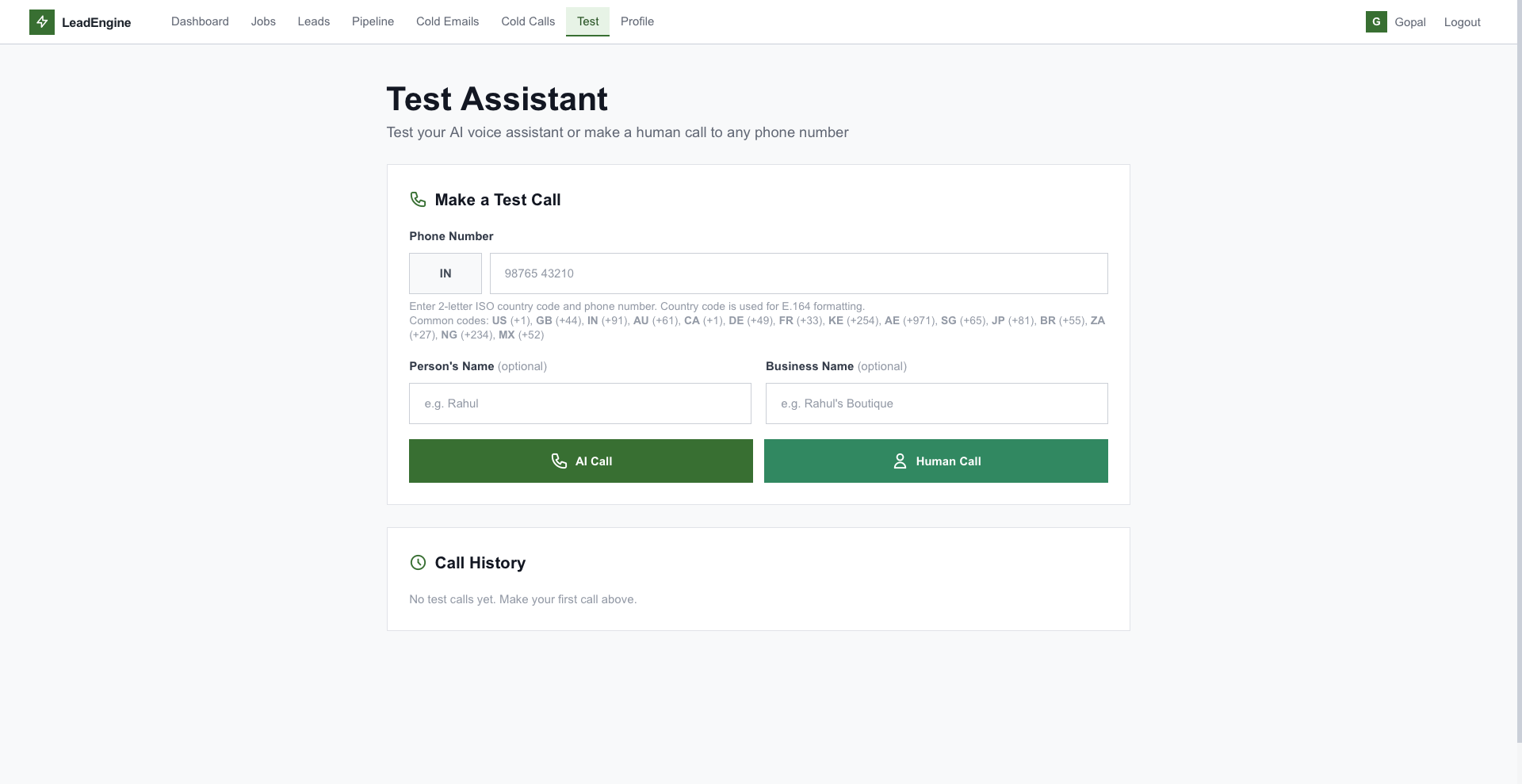

Email via SMTP, autonomous AI voice calls via Vapi, human browser calls via Twilio — paced per hour, resumable on crash.
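Resuming after a crash is safe only if leads that were already contacted are skipped. The following is a minimal sketch. It assumes that each successful send is recorded (the platform logs every touch as an Interaction) and that the resume step filters against that record. `pending_leads` and the ID sets are hypothetical.

```python
def pending_leads(campaign_lead_ids: list[str], contacted_ids: set[str]) -> list[str]:
    """Leads still owed a touch, in original campaign order.

    On restart, `contacted_ids` would be rebuilt from the interaction log, so any lead
    already logged is not contacted again. A crash between a send and its log entry
    could still repeat that one touch.
    """
    return [lead_id for lead_id in campaign_lead_ids if lead_id not in contacted_ids]
```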

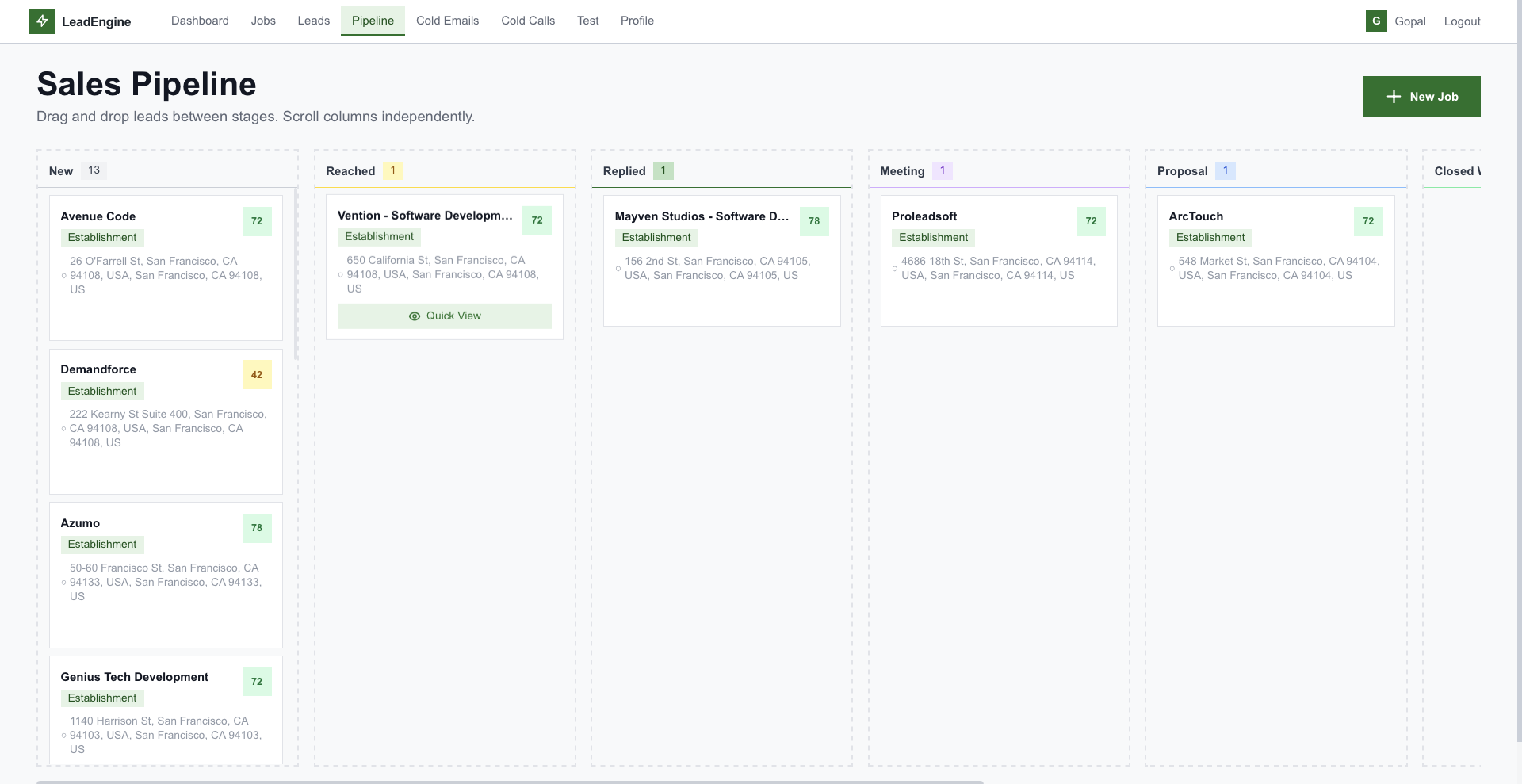

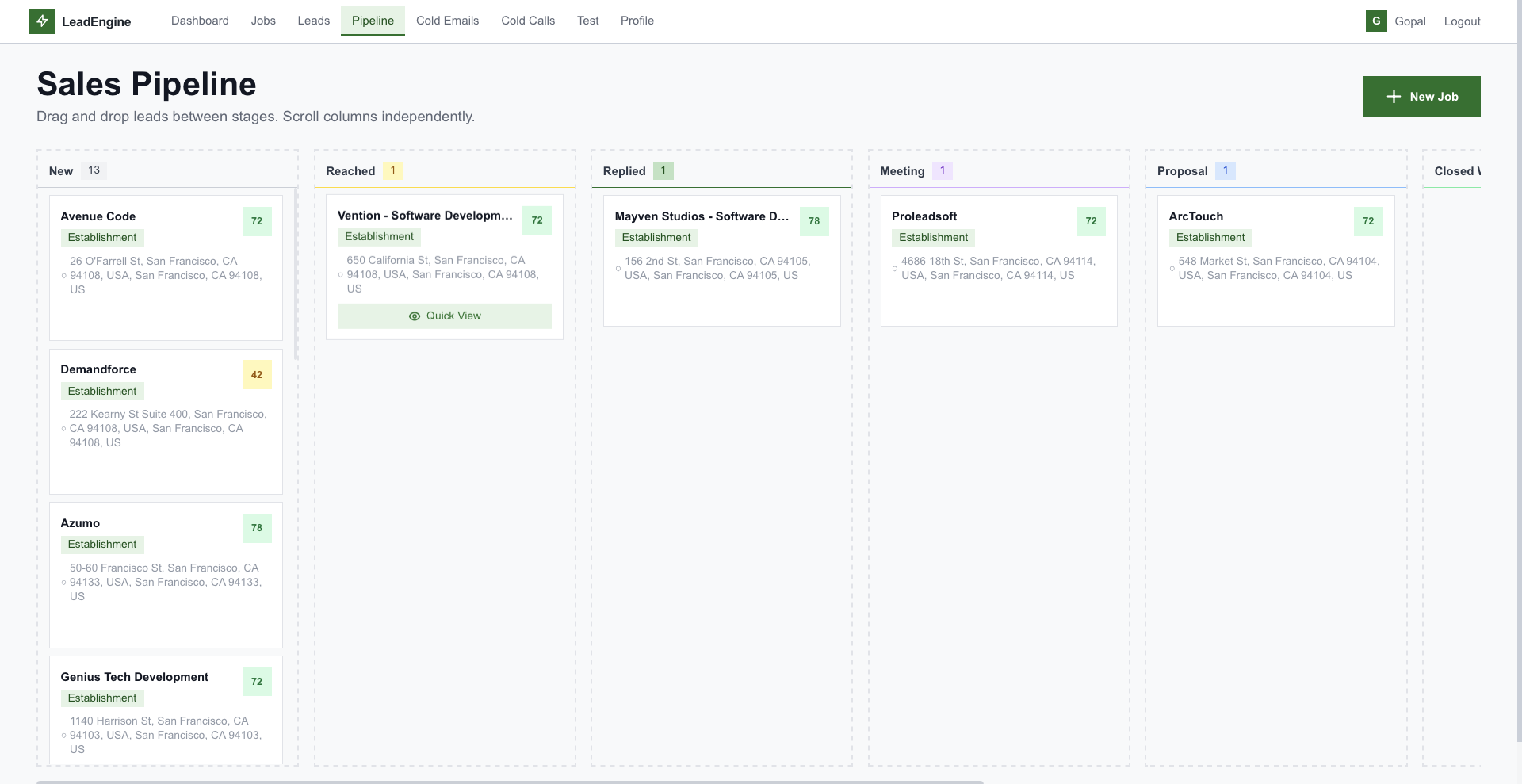

Progressive AI follow-ups every few days, kanban pipeline, full interaction audit trail for every touch.

```python
import asyncio
from uuid import UUID


# 5-stage lead generation pipeline
async def run_job_background(job_id: UUID):
    job = await get_job(job_id)

    # 1. Discovery: Google Places text search
    job.current_stage = "discovery"
    leads = await places_service.search_places(
        query=job.query, max_results=job.max_results,
    )

    # 2. Enrich from Places details
    for lead in leads:
        await enrich_from_places(lead)

    # 3. Deep research: scrape all websites concurrently (leads without one are skipped)
    job.current_stage = "deep_research"
    await asyncio.gather(*[
        scraper_service.scrape_website(l.website) for l in leads if l.website
    ])

    # 4. AI analysis: Claude Haiku 4.5
    for lead in leads:
        result = await ai_service.analyze_lead(
            lead, profile=user.business_profile,  # `user`: the job's owner, loaded elsewhere
        )
        lead.ai_score = result["score"]
        lead.intelligence = result["summary"]

    # 5. Ready
    job.status = "completed"
    await db.commit()
```

Routes, controllers, services, and models are cleanly separated: every heavy call is non-blocking, and every long-running job is restart-safe.

Google Places text search with pagination, sub-query expansion via Claude, up to 500 results per job.
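Combining pagination with sub-query expansion means following each query's page tokens while deduplicating places found by more than one sub-query. The following is a minimal sketch. `fetch_page` is a hypothetical wrapper that returns `(results, next_page_token)` and stands in for the real Places call. The `"id"` key is also assumed.

```python
from typing import Callable, Optional

# Hypothetical wrapper: fetch_page(query, page_token) -> (results, next_page_token)
FetchPage = Callable[[str, Optional[str]], tuple[list[dict], Optional[str]]]


def collect_results(fetch_page: FetchPage, queries: list[str], max_results: int) -> list[dict]:
    """Page through each (sub-)query, dropping duplicate places, until the cap is hit."""
    seen: set[str] = set()
    results: list[dict] = []
    for query in queries:
        token: Optional[str] = None
        while len(results) < max_results:
            page, token = fetch_page(query, token)
            for place in page:
                if place["id"] not in seen:  # same place returned by another sub-query
                    seen.add(place["id"])
                    results.append(place)
            if not token:  # no more pages for this query
                break
        if len(results) >= max_results:
            break
    return results[:max_results]
```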

BeautifulSoup scraper extracts title, meta, headings, body, socials, emails, phones — stored as JSON research.
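The contact-extraction part of that scraper comes down to reading the page title, collecting links, and pattern-matching emails and social profiles. The following is a dependency-free sketch of that step. It uses the standard library's `html.parser` in place of BeautifulSoup. The email regex and the list of social hosts are simplified assumptions, and `extract_research` is a hypothetical name.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urlparse

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")  # simplified, not RFC 5322
SOCIAL_HOSTS = ("facebook.com", "instagram.com", "linkedin.com", "twitter.com", "x.com")


class _PageParser(HTMLParser):
    """Collects the <title>, every text node, and every <a href>."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.text: list[str] = []  # script/style contents are not filtered in this sketch
        self.links: list[str] = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        self.text.append(data)


def _is_social(href: str) -> bool:
    # Compare hostnames, so "netflix.com" does not match "x.com"
    host = (urlparse(href).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in SOCIAL_HOSTS)


def extract_research(html: str) -> dict:
    parser = _PageParser()
    parser.feed(html)
    mailtos = {h[len("mailto:"):].split("?")[0]
               for h in parser.links if h.lower().startswith("mailto:")}
    emails = set(EMAIL_RE.findall(" ".join(parser.text))) | mailtos
    return {
        "title": parser.title.strip(),
        "emails": sorted(emails),
        "socials": sorted({h for h in parser.links if _is_social(h)}),
    }
```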

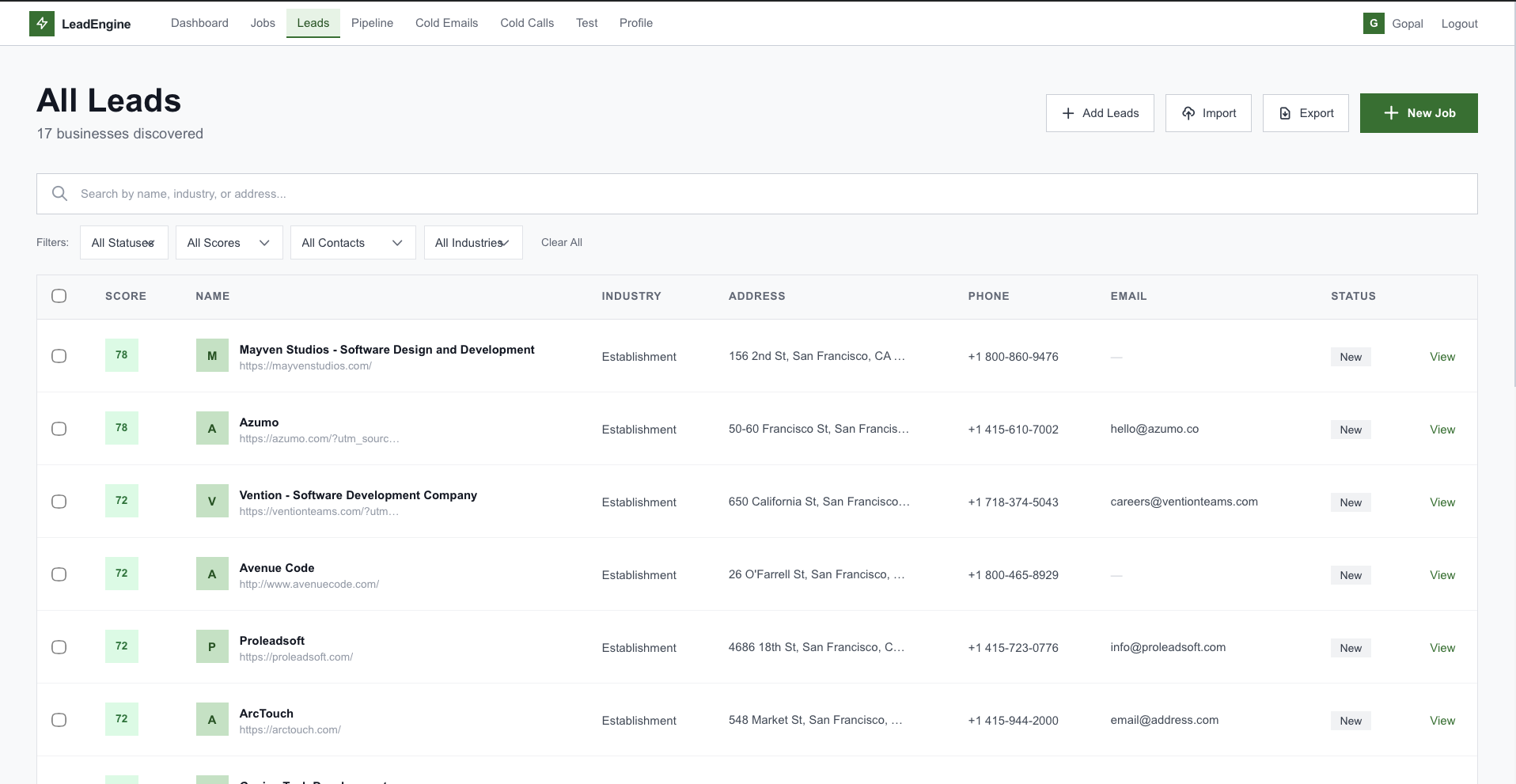

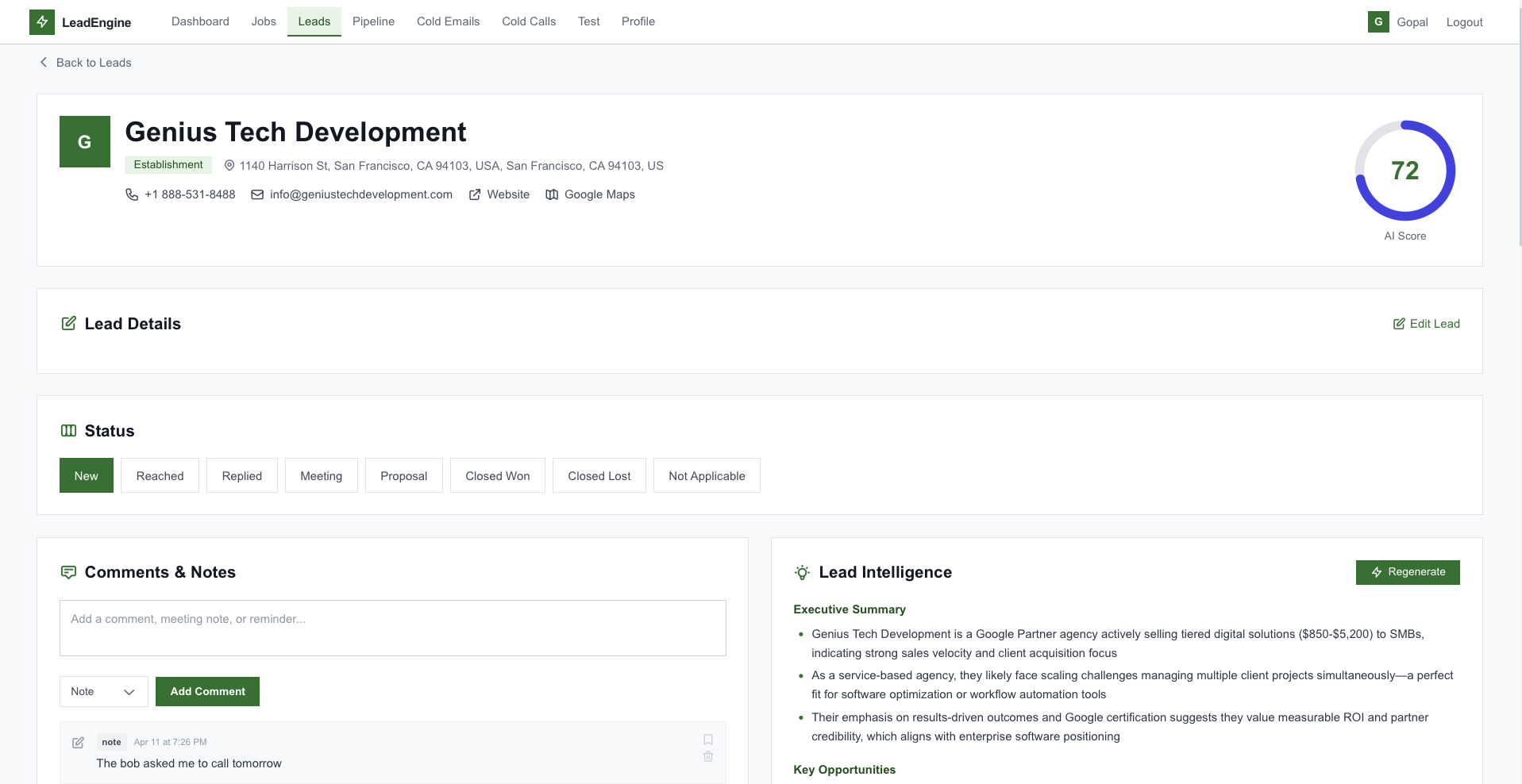

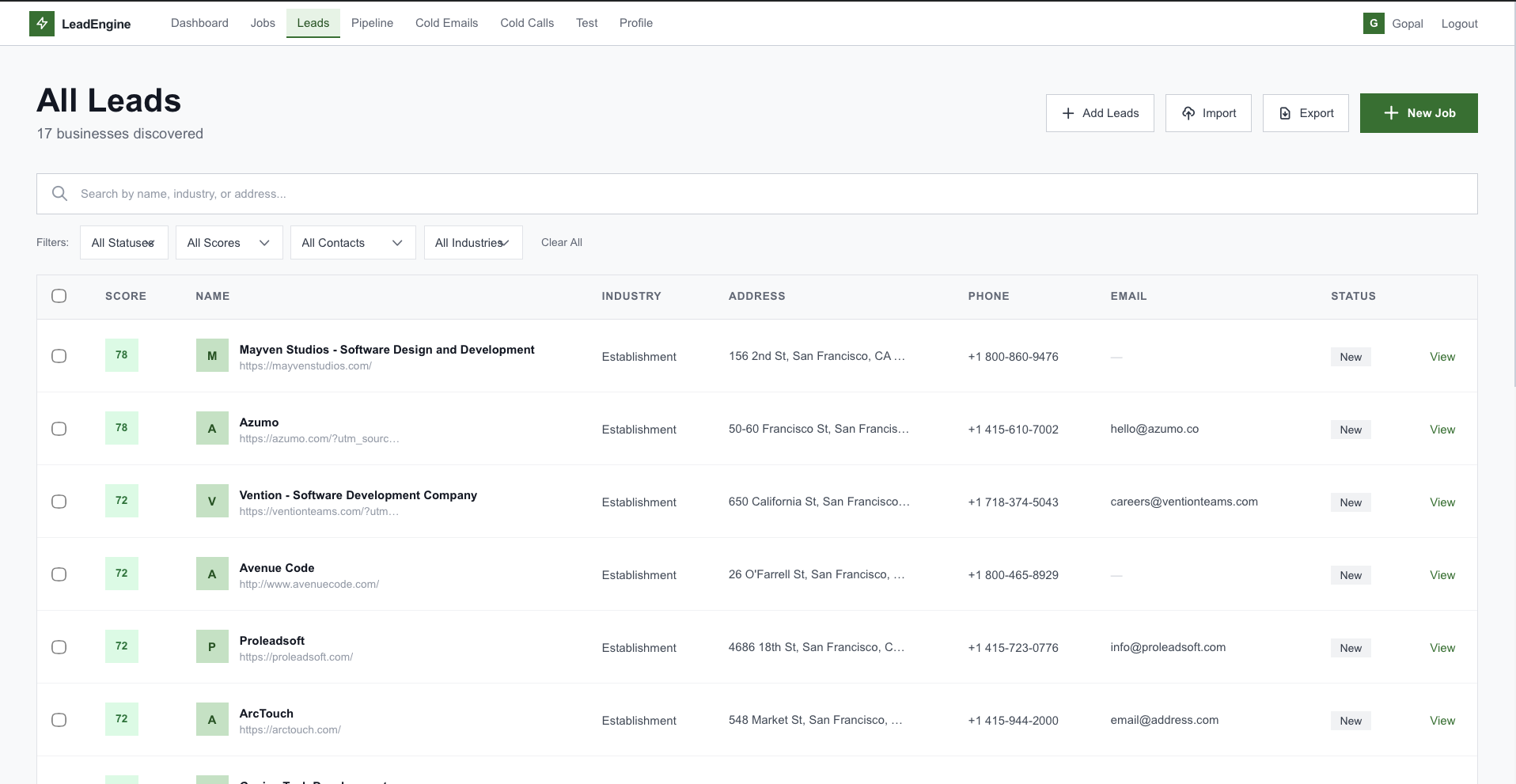

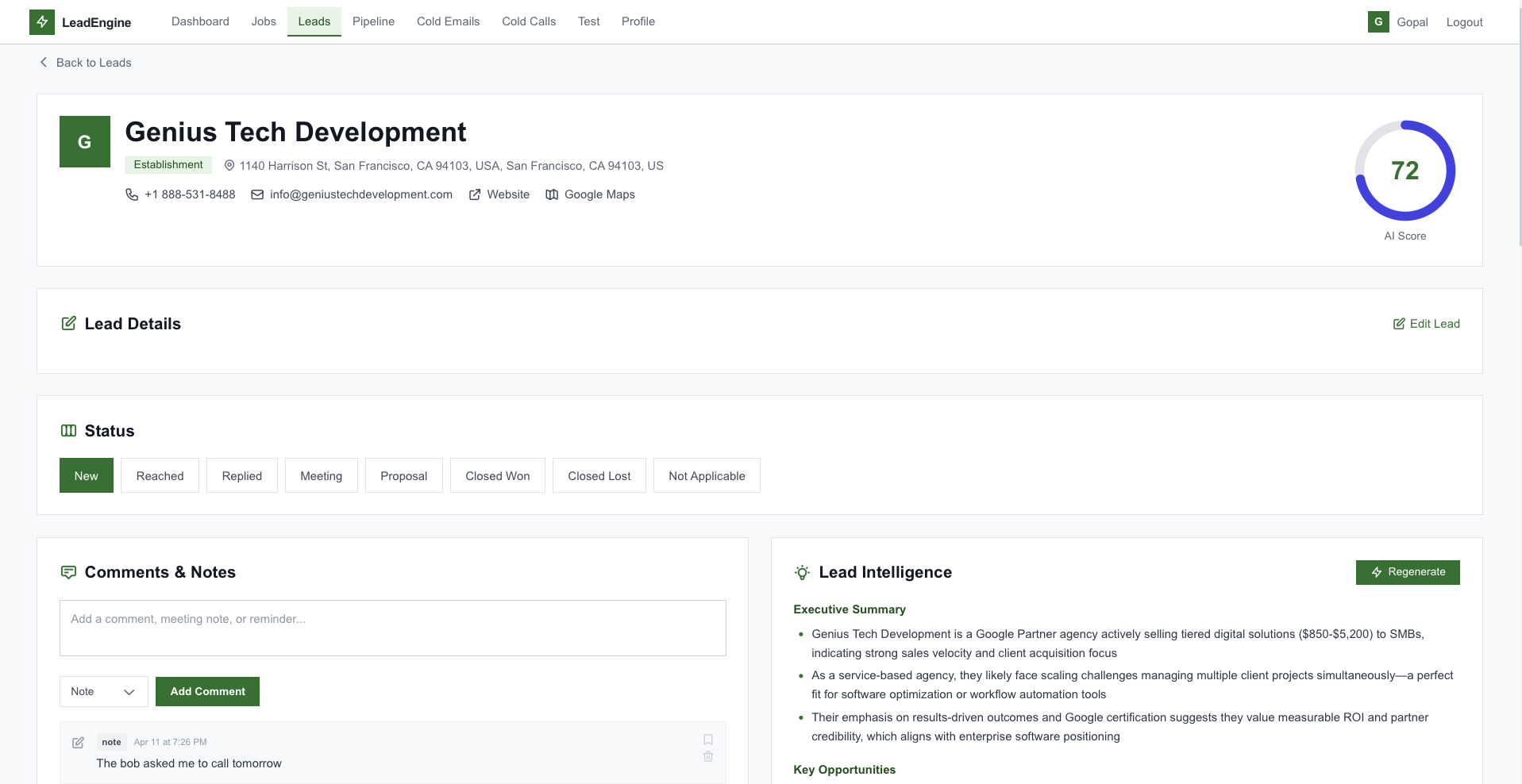

Claude scores 0–100, flags expansion signals (hiring, ecom launches), extracts key contacts and products.
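To turn Claude's reply into those fields reliably, the code has to tolerate extra prose around the JSON and out-of-range values. The following is a minimal sketch of that "forgiving" parsing. It assumes the model returns one JSON object, possibly inside a Markdown code fence. `parse_json_loose`, `normalize_analysis`, and the key names are hypothetical and are not the project's `_parse_json`.

```python
import json
import re

# Matches a Markdown code fence (three backticks, optional "json" tag) and captures its body.
_FENCE_RE = re.compile(r"`{3}(?:json)?\s*(.*?)`{3}", re.DOTALL)


def parse_json_loose(text: str) -> dict:
    """Best effort: unwrap a code fence if present, then parse the outermost {...} span."""
    m = _FENCE_RE.search(text)
    if m:
        text = m.group(1)
    start, end = text.find("{"), text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found")
    return json.loads(text[start:end + 1])


def normalize_analysis(raw: dict) -> dict:
    """Clamp the score into 0-100 and default any missing list fields to []."""
    try:
        score = round(float(raw.get("score", 0)))
    except (TypeError, ValueError):
        score = 0
    return {
        "score": max(0, min(100, score)),
        "signals": list(raw.get("signals") or []),
        "contacts": list(raw.get("contacts") or []),
        "products": list(raw.get("products") or []),
    }
```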

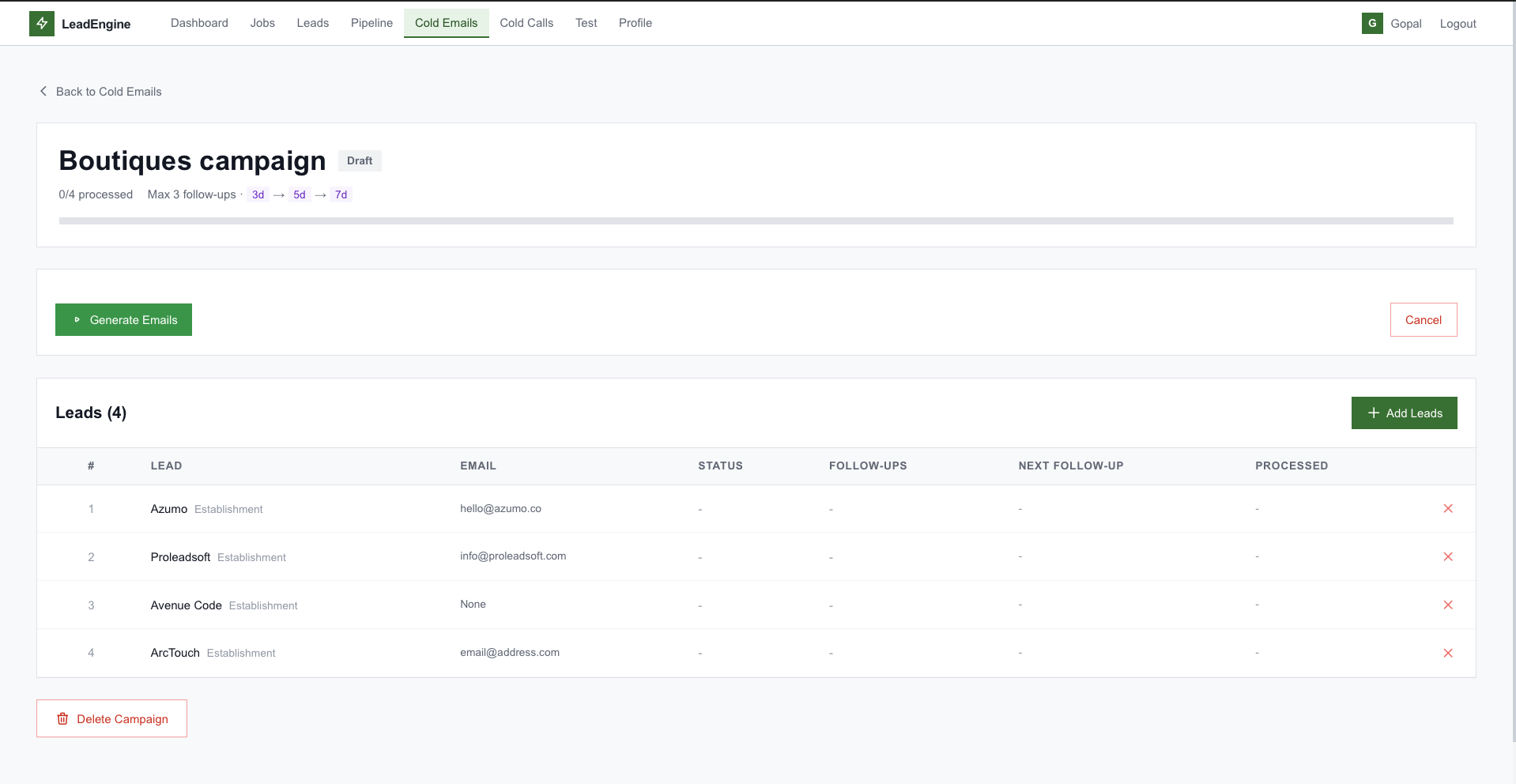

Per-lead subject + body referencing real signals. Tone selector. Plain text + HTML MIME with CTA buttons.
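A plain-text + HTML email is a `multipart/alternative` message, which Python's standard `email` package can build directly. The following is a minimal sketch. `build_email` and the CTA styling are illustrative, and the body is assumed to be plain text whose paragraphs are separated by blank lines.

```python
from email.message import EmailMessage
from html import escape


def build_email(sender: str, to: str, subject: str, body: str,
                cta_text: str, cta_url: str) -> EmailMessage:
    """Plain-text part plus an HTML alternative that renders the CTA as a button."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = to
    msg["Subject"] = subject
    # Plain-text part first: clients that cannot render HTML fall back to it.
    msg.set_content(f"{body}\n\n{cta_text}: {cta_url}")
    paragraphs = "".join(f"<p>{escape(p)}</p>" for p in body.split("\n\n"))
    button = (
        f'<a href="{escape(cta_url)}" style="display:inline-block;padding:10px 16px;'
        'background:#2563eb;color:#fff;border-radius:6px;text-decoration:none">'
        f"{escape(cta_text)}</a>"
    )
    # Adding the HTML alternative turns the message into multipart/alternative.
    msg.add_alternative(f"<html><body>{paragraphs}<p>{button}</p></body></html>", subtype="html")
    return msg
```

The resulting message can be passed to `smtplib.SMTP.send_message`.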

Autonomous cold calls with custom system prompts and per-lead context. Transcript + recording webhook.

Browser-to-PSTN via a JWT access token + TwiML conference. Recording, hold/resume, status webhooks.
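A conference-based call is driven by TwiML that dials the caller into a named room. The following sketch builds that TwiML by hand with the standard library rather than with Twilio's helper library. The room name and callback URL are placeholders, and the choice of recording and status-callback attributes is an assumption about how the feature is configured.

```python
import xml.etree.ElementTree as ET


def conference_twiml(room: str, status_url: str, record: bool = True) -> str:
    """TwiML that places the caller into a named conference with status callbacks."""
    response = ET.Element("Response")
    dial = ET.SubElement(response, "Dial")
    conference = ET.SubElement(dial, "Conference", {
        "record": "record-from-start" if record else "do-not-record",
        "statusCallback": status_url,
        "statusCallbackEvent": "start end join leave",
    })
    conference.text = room
    return '<?xml version="1.0" encoding="UTF-8"?>' + ET.tostring(response, encoding="unicode")
```

Putting both parties in a conference, rather than bridging them directly, is what makes hold/resume possible: either leg can be held or moved without ending the call.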

Per-second and per-hour throttles. Resumable on crash via resume_interrupted_campaigns().
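Two throttles can be combined by waiting for whichever limit is further from allowing the next send. The following is a minimal sketch using a minimum gap for the per-second rate and a rolling one-hour window for the hourly cap. `DualThrottle` is a hypothetical name, and the clock is injectable so the logic can be tested without sleeping.

```python
import asyncio
import time
from collections import deque


class DualThrottle:
    """At most `per_second` sends per second and `per_hour` sends per rolling hour."""

    def __init__(self, per_second: float, per_hour: int, clock=time.monotonic):
        self.min_gap = 1.0 / per_second
        self.per_hour = per_hour
        self.clock = clock
        self._last = float("-inf")
        self._window: deque[float] = deque()  # send timestamps within the last hour

    def delay_needed(self) -> float:
        now = self.clock()
        while self._window and now - self._window[0] >= 3600:
            self._window.popleft()  # drop sends older than one hour
        wait = max(0.0, self._last + self.min_gap - now)
        if len(self._window) >= self.per_hour:
            wait = max(wait, self._window[0] + 3600 - now)  # until the oldest send expires
        return wait

    def record(self) -> None:
        now = self.clock()
        self._last = now
        self._window.append(now)

    async def acquire(self) -> None:
        # No await between the final check and record(), so this is atomic under asyncio.
        while (wait := self.delay_needed()) > 0:
            await asyncio.sleep(wait)
        self.record()
```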

5-min scheduler generates follow-ups with full thread context. Never repeats the pitch — progressive angles.

Drag-and-drop kanban (new → closed) plus an Interaction log of every call, email, reply, and bounce, giving a complete audit trail for compliance.

The AI service is not just an SDK wrapper — it's a production-grade orchestrator with concurrency caps, exponential backoff, forgiving JSON parsing, and reusable task-specific prompts.

```python
import asyncio

from anthropic import AsyncAnthropic, RateLimitError

# Concurrency-controlled Claude client
_sem = asyncio.Semaphore(2)  # at most 2 Claude requests in flight
_client = AsyncAnthropic(api_key=...)


async def _call_claude(
    system: str, user: str,
    max_retries: int = 4,
) -> dict:
    async with _sem:
        for attempt in range(max_retries):
            try:
                resp = await _client.messages.create(
                    model="claude-haiku-4-5-20251001",
                    max_tokens=4096,
                    system=system,
                    messages=[{"role": "user",
                               "content": user}],
                )
                # _parse_json: the forgiving JSON parser, defined elsewhere
                return _parse_json(resp.content[0].text)
            except RateLimitError:
                # Exponential backoff: 3 s, 6 s, 12 s, 24 s
                await asyncio.sleep(3 * 2**attempt)
        raise RuntimeError("Claude unavailable")
```

"boutiques in Texas USA" · max 100 · require website. A job row is created and queued.
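A job request bundles the query with its optional filters. The following is a minimal sketch. It assumes a hypothetical `JobRequest` dataclass whose field names and default are illustrative. The 500 cap mirrors the per-job limit quoted for discovery.

```python
from dataclasses import dataclass

MAX_RESULTS_CAP = 500  # per-job discovery limit


@dataclass
class JobRequest:
    """Hypothetical shape of a search job: one query plus optional filters."""
    query: str
    require_website: bool = False
    require_phone: bool = False
    max_results: int = 100

    def __post_init__(self) -> None:
        self.query = self.query.strip()
        if not self.query:
            raise ValueError("query must not be empty")
        # Clamp into [1, MAX_RESULTS_CAP] instead of rejecting out-of-range values.
        self.max_results = max(1, min(self.max_results, MAX_RESULTS_CAP))


req = JobRequest("boutiques in Texas USA", require_website=True, max_results=100)
```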

Google Places text search, paginated. Basic data extracted — name, address, phone, website, rating, category.

BeautifulSoup scrapes each website: title, meta, headings, socials, emails, phones. Stored as JSON research blob.

Score 0–100, expansion signals, key contacts, business description, products list. Persisted on the Lead.

User filters by score, signals, industry, location. Picks the top 50 leads for a campaign.
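Selecting a campaign list is a filter followed by a sort on score. The following is a minimal sketch that treats leads as plain dicts. `pick_top_leads` and the keys (`score`, `industry`, `address`, `signals`) are hypothetical, and location matching is simplified to a case-insensitive substring check on the address.

```python
from typing import Optional


def pick_top_leads(leads: list[dict], *, min_score: int = 0,
                   industry: Optional[str] = None, location: Optional[str] = None,
                   require_signal: bool = False, limit: int = 50) -> list[dict]:
    """Filter scored leads, then keep the `limit` highest-scoring ones."""
    def keep(lead: dict) -> bool:
        return (
            lead.get("score", 0) >= min_score
            and (industry is None or lead.get("industry") == industry)
            and (location is None or location.lower() in lead.get("address", "").lower())
            and (not require_signal or bool(lead.get("signals")))
        )

    return sorted(filter(keep, leads), key=lambda l: l.get("score", 0), reverse=True)[:limit]
```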

Emails or AI voice calls, throttled per second and per hour. Every touch logged as an Interaction.

Scheduler fires every 5 min · gentle nudge → value-add → social proof → last chance. Thread-aware, never repeats.
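On each scheduler tick, the decision for a lead can be reduced to two questions: is a follow-up due, and which angle comes next? The following is a minimal sketch that uses the angle order listed above. `next_follow_up` is hypothetical, the three-day gap stands in for "every few days", and it assumes a reply ends the sequence.

```python
from datetime import datetime, timedelta
from typing import Optional

ANGLES = ("gentle nudge", "value-add", "social proof", "last chance")


def next_follow_up(sent: int, last_touch: datetime, now: datetime, *,
                   replied: bool = False,
                   gap: timedelta = timedelta(days=3)) -> Optional[str]:
    """Angle for the follow-up due now, or None if nothing should be sent.

    Indexing by the number of follow-ups already sent means no angle repeats.
    """
    if replied or sent >= len(ANGLES) or now - last_touch < gap:
        return None
    return ANGLES[sent]
```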

Drag through NEW → CONTACTED → REPLIED → MEETING → PROPOSAL → CLOSED. Full interaction history on every lead card.
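The board columns map naturally to an enum, and every drag can append an audit entry. The following is a minimal sketch. `Stage` and `move` are hypothetical names, and the log entry is a plain dict standing in for the Interaction model. Free movement in either direction is assumed because the board is drag-and-drop.

```python
from enum import Enum


class Stage(str, Enum):
    NEW = "new"
    CONTACTED = "contacted"
    REPLIED = "replied"
    MEETING = "meeting"
    PROPOSAL = "proposal"
    CLOSED = "closed"


def move(current: Stage, target: Stage, log: list[dict]) -> Stage:
    """Move a card to `target`, recording the change; a no-op drop logs nothing."""
    if target != current:
        log.append({"event": "stage_change", "from": current.value, "to": target.value})
    return target
```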


From a single search query to closed-won — discovery, enrichment, personalization, multi-channel outreach, follow-ups, and audit — all automated, paced, and restart-safe.